Many people approach AI music with the wrong expectation. They imagine a single prompt, a single button, and an immediate final song. That idea is appealing, but it does not describe how strong creative outcomes usually happen. Better results tend to come from a loop: describe, generate, listen, notice what is missing, revise, and generate again. That is why AI Music Generator makes more sense as an iterative environment than as a one-shot machine. The official pages do not hide that logic. They repeatedly point users toward choosing modes, selecting models, adding more precise style details, and regenerating when the output is not yet right.

That is a healthier way to understand the platform. Instead of asking whether it can read your mind on the first attempt, a better question is whether it helps you refine your intention quickly. In my view, that is the more important standard. A good creative tool does not need to be perfect immediately. It needs to make the next decision clearer.


Why Listening Is Part Of Writing The Prompt

Text prompts in music generation are often treated like commands. In practice, they are more like hypotheses.

You think a track should feel warm, but maybe what the project really needs is restraint. You think the chorus should sound large, but perhaps the lyrics work better with something more intimate. You may ask for a cinematic build and discover that the visual project actually becomes stronger with less drama. These are not failures. They are discoveries.

That is why iteration matters. The first output gives shape to your assumptions. Once you hear the result, your language improves. You stop writing generic requests and start writing musical direction grounded in what you have actually heard.

ToMusic’s official FAQ supports that reading directly. It states that users can adjust prompts, try a different model, refine lyrics, or add more precise style tags if they do not like the initial result. That framing tells us something useful: the platform is designed around refinement, not blind submission.

How The Official Interface Encourages Revision

The structure of the product makes the iterative logic visible.

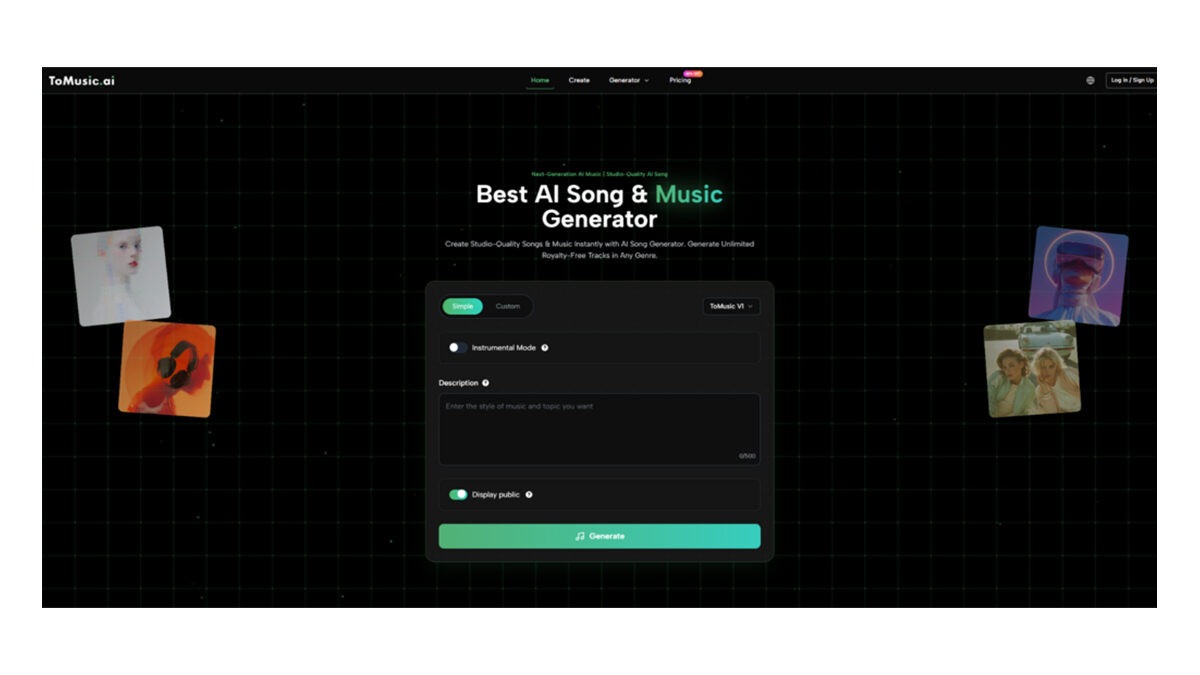

Simple Mode Helps You Test A Direction Quickly

Simple mode is useful when you are still defining the space of the song. You do not need to overbuild your first idea. You can write a descriptive prompt, hear how the system responds, and then decide which parts of the idea deserve more control.

This is especially practical in the early stage of creative work. A rough audio answer often clarifies the next step faster than a longer planning session.

Custom Mode Lets Revision Become Precise

Once the idea is clearer, Custom mode becomes more valuable. The fields for title, styles, and lyrics let the user direct the system with more intention. At that stage, the goal is usually not broad discovery. It is controlled change.

You may keep the same lyrics and shift the style. You may preserve the style but rewrite the chorus. You may keep both and change the model. Revision becomes structured rather than random.

Instrumental Option Prevents Unnecessary Complexity

A lot of prompts fail because they try to do too much. If the project does not need vocals, starting in instrumental mode removes one entire layer of difficulty. That can make the outcome easier to judge because you are listening for texture, motion, and mood rather than also evaluating lyric delivery.

Why Model Changes Are A Form Of Editing

One of the most useful parts of ToMusic is that it offers four models with distinct roles rather than one generic engine. According to the official FAQ, V4 leans toward genuine vocals and creative control, V3 toward rich harmonies and innovative patterns, V2 toward extended compositions and tonal depth, and V1 toward balanced performance with a simpler path.

That means revision does not always require rewriting the prompt. Sometimes the most effective edit is changing the model.

| Revision problem | Possible platform response | Why it can help |
| --- | --- | --- |
| Output feels too basic | Try a model aimed at richer harmonies | Arrangement depth may improve |
| Song needs more vocal presence | Move toward the model focused on vocals | Delivery may feel more expressive |
| Piece needs longer development | Choose the model associated with extended compositions | Structure may breathe more naturally |
| Early draft is taking too long to evaluate | Use the more streamlined option first | Faster direction-finding |

This is one reason the platform feels more practical than a simple prompt box. It gives users more than one way to revise. In creative work, having multiple revision levers is often the difference between progress and frustration.

A Four-Step Loop Based On The Official Workflow

The official process is straightforward, but its real strength appears when you treat it as a cycle instead of a line.

Step One: Enter The First Clear Version Of The Idea

Start with either a descriptive prompt or a lyric-based concept. The goal is not to write the perfect instruction. The goal is to give the system enough direction to reveal the musical shape of your idea.

Step Two: Choose The Most Appropriate Setup

Decide whether the piece should be instrumental or lyric-driven. Then choose the model whose stated strengths best match what matters most in the track.

Step Three: Generate And Listen Critically

Do not only ask whether the result sounds “good.” Ask whether it solves the problem. Is the energy right? Does the pacing fit? Are the words too crowded? Is the emotional tone closer or farther than expected?

Step Four: Revise With Specific Intent

Change one or two meaningful things. Adjust the style tags. Refine the lyrics. Shift the model. Clarify mood, tempo, instrumentation, or vocal direction. Then generate again and compare.
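As a rough illustration of the "change one or two things" discipline, the sketch that follows records each attempt's settings and counts how many revision levers moved between two attempts. The `GenerationSettings` record, its field names, and the two-change threshold are hypothetical; they mirror the options described in this article (mode, model, instrumental toggle, style tags, lyrics), not any actual ToMusic API.

```python
from dataclasses import dataclass, fields, replace


# Hypothetical record of one generation attempt; not a ToMusic API.
@dataclass(frozen=True)
class GenerationSettings:
    mode: str                # e.g. "simple" or "custom"
    model: str               # e.g. "V1" through "V4"
    instrumental: bool       # True skips vocals entirely
    styles: tuple[str, ...]  # style tags such as ("cinematic", "restrained")
    lyrics: str              # empty string for instrumental attempts


def changed_levers(before: GenerationSettings, after: GenerationSettings) -> list[str]:
    """Return the names of the fields that differ between two attempts."""
    return [
        f.name
        for f in fields(GenerationSettings)
        if getattr(before, f.name) != getattr(after, f.name)
    ]


def is_focused_revision(
    before: GenerationSettings, after: GenerationSettings, max_changes: int = 2
) -> bool:
    """True if the revision changes at least one lever but no more than max_changes."""
    count = len(changed_levers(before, after))
    return 1 <= count <= max_changes


# Usage: keep the lyrics and styles, change only the model.
draft = GenerationSettings(
    mode="custom", model="V3", instrumental=False,
    styles=("cinematic",), lyrics="Hold the line",
)
retry = replace(draft, model="V4")
print(changed_levers(draft, retry))       # ['model']
print(is_focused_revision(draft, retry))  # True
```

Keeping each attempt as an immutable record makes comparison cheap: when a regeneration sounds better or worse, the list of changed levers tells you which decision to credit.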

How Better Iteration Produces Better Prompts

Users often think good prompts are written before the first generation. Usually they are written after it.

The first output teaches you where your language was weak. Maybe “cinematic” was too broad. Maybe “sad” produced something flatter than “restrained and reflective.” Maybe the tempo felt wrong because the emotional target was actually urgency rather than excitement. A useful platform teaches users how to speak more musically by making the consequences of vague language audible.

That is one of the most underappreciated aspects of AI music creation. The tool is not just generating songs. It is training users to describe music more clearly.

Why This Matters For Different Types Of Creators

The iterative model fits several kinds of real work better than the fantasy of instant perfection.

Editors Need Comparative Listening

A video editor may want to hear how the same visual sequence feels with different musical temperatures. One track may lean too sentimental. Another may be too energetic. A third may finally match the pacing of the cut. Iteration is what gets them there.

Writers Need To Hear What Their Lyrics Actually Do

Words on a page can feel strong until they are sung. A line may sound too long, too stiff, or too literal in performance. Fast regeneration helps writers tighten language through listening rather than guesswork.

Marketers Need Fast A/B Direction Testing

Campaign work rarely stops at one version. Teams often compare moods, tonal positioning, and energy levels. A platform that supports regeneration and variation makes that process faster.

How Lyrics Become Part Of The Revision Loop

Lyrics change the nature of iteration because the user is now revising not only sound but language.

When words enter the process, you are no longer only testing genre or instrumentation. You are testing line length, phrasing, emotional clarity, and hook strength. Sometimes a lyric that reads well is too dense to sing naturally. Sometimes a chorus needs more repetition than the writer expected. Sometimes a small wording change transforms the musical flow.

This is where Lyrics to Music AI becomes especially interesting. It lets lyric writers hear their text as part of an evolving musical draft rather than as a static document waiting for future production. That can change the writing itself. Once the writer hears the line inside the song, they can decide whether to simplify, intensify, or restructure it.

What The Commercial And Output Features Add

The official materials also point to royalty-free licensing, commercial usage rights, downloadable WAV and MP3 files, and certain plan-level functions such as stem extraction and vocal removal. Those details matter because iteration becomes more valuable when the resulting files can move into real workflows.

A creator may generate several versions, download the most promising one, and use it immediately in editing. Another may keep it as a scratch track for client review. Another may use it as a structural demo before rebuilding parts elsewhere. The point is that the iteration loop does not end inside the platform.

Where People Usually Misjudge Tools Like This

A more realistic view of the platform comes from noticing the wrong assumptions users often bring to it.

Mistake One: Treating The First Output As A Verdict

The first result rarely tells you the full potential of the idea. It tells you where to aim next.

Mistake Two: Revising Everything At Once

If the output is off, changing every variable makes learning harder. In my view, better iteration often comes from keeping some things stable while changing one important element, such as the model, the style framing, or the chorus wording.

Mistake Three: Confusing Speed With Shallowness

Fast Revision Can Still Produce Serious Creative Insight

Because the process is faster, some people assume it must also be less thoughtful. That is not necessarily true. Speed can actually create space for more reflection because you spend less time waiting and more time comparing.

Why Iteration Is The Real Product

The deepest value of ToMusic is not that it can output music from text. Plenty of people already know that AI systems can do that. What matters more is that it makes musical iteration accessible to people who are not traditional producers.

That changes the creative rhythm. Instead of waiting until the entire idea is technically buildable, users can test it early. Instead of arguing abstractly about mood, they can compare audible versions. Instead of assuming their lyrics work, they can hear them. Instead of locking into a single direction too soon, they can revise with more precision.

In other words, the platform is not only giving users songs. It is giving them a faster loop for discovering what they actually mean. For anyone making music-adjacent decisions, that may be the most important thing it does.