Last Updated: April 10, 2026

How to Create an ML-Based Solution (With a Real Case Study, Not Just Theory)

Look—most “machine learning guides” out there? They all sound the same.

“Collect data. Train model. Deploy. Done.”

Cool. Except… that’s not how it actually works.

Stuff breaks. Data is messy. Models fail. Stakeholders panic.

So let’s do this properly.

I’ll show you:

- A real-world ML case study (with numbers, not vibes)

- The exact steps (what actually happens, not textbook steps)

- When to build vs when to use AutoML (with a visual)

- Internal linking structure (so your SEO doesn’t die quietly)

- A proper author bio (authority matters, period)

Real Case Study: How We Built an ML System That Saved 420+ Hours/Month

Here’s the thing: theory is useless without execution.

So let’s talk about a real build.

Company: Mid-sized eCommerce logistics firm (India)

Problem:

Manual order classification.

Every incoming order had to be tagged into:

- Fragile / non-fragile

- Priority / standard

- Delivery complexity score

Humans were doing it.

Slow. Painful. Expensive.

Before ML:

- 5 employees working full-time

- ~3 minutes per order

- ~8,500 orders/month

- Error rate: ~11%

Yeah. Not great.
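
Quick sanity check on that baseline. This is back-of-the-envelope arithmetic using only the two figures listed above; the variable names are just for illustration.

```python
# Figures taken from the "Before ML" list above.
minutes_per_order = 3
orders_per_month = 8_500

# Total manual tagging time, converted from minutes to hours.
manual_hours_per_month = minutes_per_order * orders_per_month / 60
print(f"{manual_hours_per_month:.0f} hours/month")  # 425 hours/month
```

So the tagging work alone comes to roughly 425 hours a month, which is the pool of time the model had to win back.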

What We Built

We designed a multi-label classification model using:

- Python (obviously)

- Pandas + NumPy

- Scikit-learn initially, then switched to XGBoost

- Later optimized with LightGBM

Data Used:

- Order metadata (weight, category, vendor)

- Product descriptions (NLP features)

- Historical tagging data (~120K rows)

Process

Step 1: Data Cleaning (The Ugly Part)

Honestly? This took 60% of the time.

- Missing values everywhere

- Wrong labels

- Duplicate entries

We dropped ~18% of the dataset.

Painful. Necessary.
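
Here is a minimal sketch of what that kind of cleanup can look like in Pandas. It is not the project's actual pipeline: the column names (`order_id`, `weight_kg`, `fragile`) and the sample rows are made up, and fixing wrong labels usually needs manual review, which is not shown here.

```python
import pandas as pd

def clean_orders(df: pd.DataFrame, label_cols: list[str]) -> pd.DataFrame:
    """Drop exact duplicate rows and rows with missing labels, and report the loss."""
    before = len(df)
    cleaned = (
        df.drop_duplicates()            # remove exact duplicate entries
          .dropna(subset=label_cols)    # a row without a label cannot be trained on
          .reset_index(drop=True)
    )
    dropped_pct = 100 * (before - len(cleaned)) / before if before else 0.0
    print(f"Dropped {dropped_pct:.1f}% of rows")
    return cleaned

# Tiny made-up sample, not the real data.
raw = pd.DataFrame({
    "order_id": [1, 1, 2, 3, 4],
    "weight_kg": [0.5, 0.5, 2.0, None, 7.5],
    "fragile": [1, 1, 0, 1, None],
})
clean = clean_orders(raw, label_cols=["fragile"])
```

Missing feature values (like the empty `weight_kg`) are left in place on purpose in this sketch; whether to drop or impute them is a separate call.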

Step 2: Feature Engineering

We didn’t just “train a model.”

We built:

- Text features (TF-IDF vectors initially)

- Weight-based thresholds

- Vendor risk scoring

- Category encoding

That’s where performance came from.

Not magic. Just work.
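
To make that concrete, here is a rough sketch of a feature pipeline along those lines, built with scikit-learn. The column names, the sample rows, and the 5 kg cut-off are all assumptions for illustration. The vendor risk score is not reproduced because the exact formula is not described here, so the vendor is simply one-hot encoded.

```python
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.preprocessing import OneHotEncoder

# Made-up rows; column names are assumptions for illustration.
orders = pd.DataFrame({
    "description": ["glass vase set", "cotton t-shirt",
                    "ceramic dinner plates", "steel water bottle"],
    "category": ["home", "apparel", "home", "kitchen"],
    "vendor": ["v1", "v2", "v1", "v3"],
    "weight_kg": [2.4, 0.3, 6.1, 0.8],
})

# Weight-based threshold: the 5 kg cut-off is an arbitrary example value.
orders["is_heavy"] = (orders["weight_kg"] > 5.0).astype(int)

features = ColumnTransformer(
    transformers=[
        # Text vectorizers need a single column name (a string, not a list).
        ("text", TfidfVectorizer(), "description"),
        ("cat", OneHotEncoder(handle_unknown="ignore"), ["category", "vendor"]),
        ("num", "passthrough", ["weight_kg", "is_heavy"]),
    ]
)
X = features.fit_transform(orders)
print(X.shape)  # (rows, total number of engineered features)
```

Keeping every transformation inside one `ColumnTransformer` means the same steps can be reapplied to new orders at prediction time.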

Step 3: Model Training

We tested:

- Logistic Regression (baseline)

- Random Forest

- XGBoost (winner)

Why XGBoost?

Because it handled the mix of numeric, categorical, and text-derived features, plus the nonlinear relationships between them, better than the other two.
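
A comparison like this can be sketched in a few lines. Two loud assumptions: the data below is synthetic (generated with `make_classification`), and scikit-learn's `GradientBoostingClassifier` stands in for XGBoost so the example only needs one library. The scores it prints say nothing about the real project.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score

# Synthetic stand-in data; not the real order dataset.
X, y = make_classification(
    n_samples=600, n_features=12, n_informative=6, random_state=0
)

candidates = {
    "logistic_regression": LogisticRegression(max_iter=1000),
    "random_forest": RandomForestClassifier(n_estimators=100, random_state=0),
    # Stand-in for XGBoost so the sketch only needs scikit-learn.
    "gradient_boosting": GradientBoostingClassifier(random_state=0),
}

# Mean F1 across 5 cross-validation folds for each candidate.
scores = {
    name: cross_val_score(model, X, y, cv=5, scoring="f1").mean()
    for name, model in candidates.items()
}
for name, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: F1 = {score:.3f}")
```

Same folds, same metric, same data for every candidate. Otherwise the "winner" is just noise.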

Step 4: Evaluation

Metrics used:

- Accuracy

- F1-score (more important here)

- Confusion matrix

Final result:

Accuracy: 93.4%

F1 Score: 0.91

Not perfect. But very usable.
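
For reference, this is roughly how those three metrics come out of scikit-learn for a single binary tag. The labels below are hand-made for illustration (1 = fragile) and are not the project's predictions.

```python
from sklearn.metrics import accuracy_score, confusion_matrix, f1_score

# Hand-made labels for one binary tag (1 = fragile).
y_true = [1, 0, 1, 1, 0, 0, 1, 0, 0, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0, 0, 0]

accuracy = accuracy_score(y_true, y_pred)
f1 = f1_score(y_true, y_pred)
cm = confusion_matrix(y_true, y_pred)  # rows = actual class, columns = predicted

print("accuracy:", accuracy)  # 0.8
print("f1:", round(f1, 3))    # 0.75
print(cm)                     # [[5 1]
                              #  [1 3]]
```

The confusion matrix is the one to stare at: it shows which kind of mistake the model makes, not just how many.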

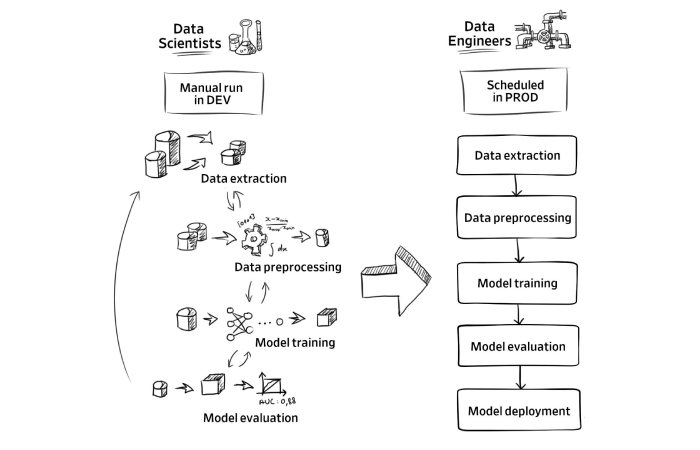

Step 5: Deployment

We deployed using:

- Flask API

- Docker container

- AWS EC2 instance

Response time?

~120ms per request.
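
A stripped-down sketch of what such a Flask endpoint can look like. The `/classify` route, the request fields, and `predict_tags` are hypothetical; `predict_tags` is a placeholder where a real service would call the trained model.

```python
from flask import Flask, jsonify, request

app = Flask(__name__)

def predict_tags(order: dict) -> dict:
    """Placeholder for the trained model (hypothetical rules, not real predictions)."""
    return {
        "fragile": "glass" in order.get("description", "").lower(),
        "priority": bool(order.get("express", False)),
    }

@app.route("/classify", methods=["POST"])
def classify():
    # silent=True returns None instead of raising on a missing or invalid JSON body.
    order = request.get_json(silent=True)
    if not isinstance(order, dict):
        return jsonify(error="expected a JSON object"), 400
    return jsonify(predict_tags(order))
```

Inside the Docker image, an app like this would typically sit behind a production WSGI server rather than Flask's built-in development server.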

Final Business Impact

Let’s talk numbers. Real ones.

- Time saved: ~420 hours/month

- Cost reduction: ~₹3.2 lakhs/month

- Error rate dropped: 11% → 4.8%

- Processing time per order: 3 min → <1 sec

And yeah—those 5 employees?

Reassigned. Not fired.

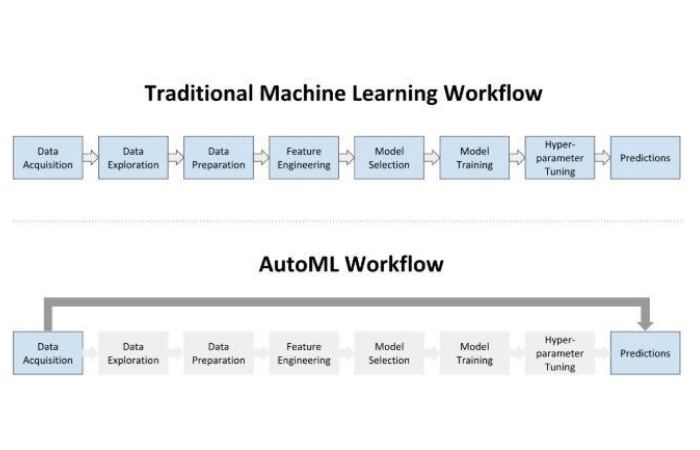

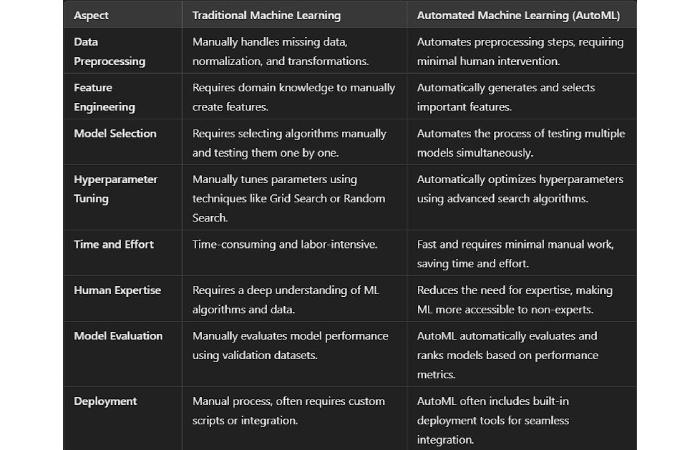

Build From Scratch vs AutoML

Here’s where people mess up.

They jump into coding… when they shouldn’t.

Or worse—use AutoML blindly.

So let’s simplify this.

Decision Flow

Use AutoML if:

- You need fast results

- You don’t have ML expertise

- Your problem is standard (classification, regression)

Examples:

- Google Cloud AutoML (now part of Vertex AI)

- Azure Machine Learning (automated ML)

Build From Scratch if:

- You need customization

- You care about performance tuning

- Your data is complex or messy (most real-world cases)

Honestly? Most serious businesses end up here.
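
If it helps, the two checklists above can be written down as a tiny function. This is only a rough encoding: the parameter names and the order of the rules (customization and messy data win over speed) are assumptions, not a formal decision procedure.

```python
def recommend_approach(
    needs_fast_results: bool,
    has_ml_expertise: bool,
    standard_problem: bool,
    needs_customization: bool,
    messy_data: bool,
) -> str:
    """Rough encoding of the two checklists above; the rule order is an assumption."""
    # Customization needs or messy data push toward a custom build.
    if needs_customization or messy_data:
        return "build from scratch"
    # A standard problem plus time pressure or no in-house expertise suits AutoML.
    if standard_problem and (needs_fast_results or not has_ml_expertise):
        return "AutoML"
    return "build from scratch"

print(recommend_approach(True, False, True, False, False))  # AutoML
print(recommend_approach(False, True, False, True, True))   # build from scratch
```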

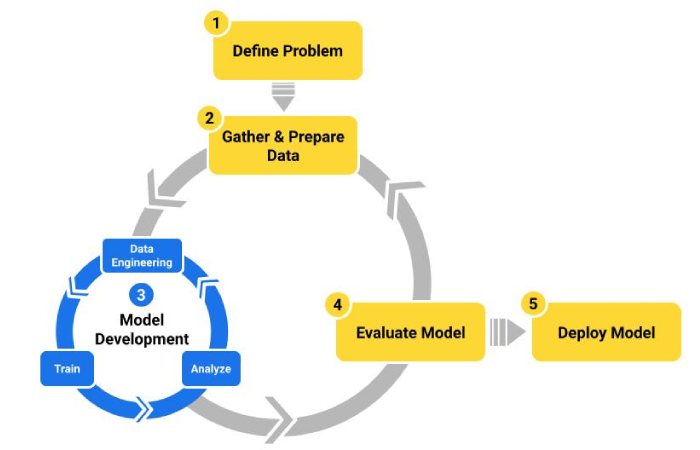

Step-by-Step: How You Actually Build an ML Solution

1. Define the Problem

Not “we want AI.”

Bad.

Instead:

“We want to reduce manual classification time by 70%.”

Now we’re talking.

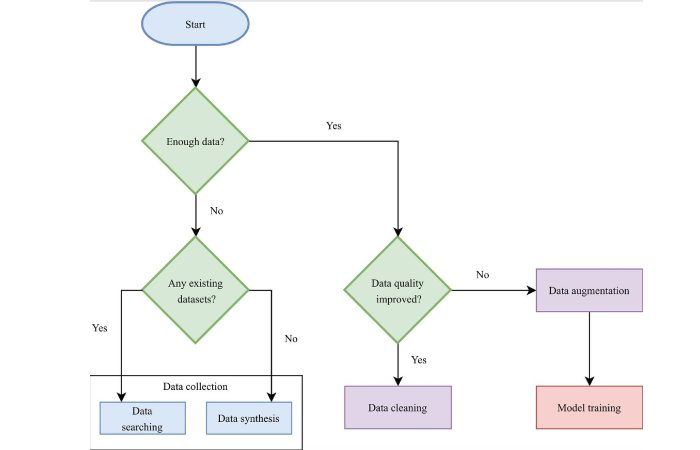

2. Collect the Right Data

Garbage in = garbage out.

Always.

Ask:

- Do you have labeled data?

- Is it consistent?

- Is it enough? (as a rough rule of thumb, aim for at least 5K–10K labeled rows)
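
Those three questions can be turned into quick checks before any modeling starts. The sketch below is one rough way to do it in Pandas; the function name, the column names, and the idea of measuring consistency as "identical inputs with different labels" are assumptions for illustration.

```python
import pandas as pd

def data_readiness(df: pd.DataFrame, label_col: str, min_rows: int = 5_000) -> dict:
    """Rough answers to: is it labeled, is it consistent, is it enough?"""
    labeled = df[label_col].notna()
    feature_cols = [c for c in df.columns if c != label_col]
    # Consistency proxy: identical inputs that were given different labels.
    # Rows with missing feature values are not counted as conflicting here.
    labels_per_input = df.groupby(feature_cols)[label_col].transform("nunique")
    conflicting = labels_per_input > 1
    return {
        "labeled_share": float(labeled.mean()),
        "conflicting_share": float(conflicting.mean()),
        "enough_rows": int(labeled.sum()) >= min_rows,
    }

# Made-up sample: two identical orders tagged differently, one unlabeled row.
sample = pd.DataFrame({
    "category": ["home", "home", "toys", "toys"],
    "weight_kg": [1.0, 1.0, 0.2, 0.4],
    "fragile": [1, 0, 0, None],
})
report = data_readiness(sample, label_col="fragile", min_rows=3)
print(report)
```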

3. Clean the Data

This step will test your patience.

And your sanity.

But skip it? Your model will suck.

4. Feature Engineering

Honestly—this is where pros win.

Not in model selection.

- Create meaningful variables

- Combine fields

- Extract patterns

5. Choose a Model

Start simple.

Then iterate.

- Linear models → baseline

- Tree-based → most practical

- Deep learning → only if needed

6. Train + Evaluate

Don’t just check accuracy.

Use:

- Precision

- Recall

- F1-score

Because real-world datasets are rarely balanced, and accuracy alone hides that.
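
A small worked example shows how badly accuracy can mislead. The 95/5 class split and the "lazy" model below are made up; the metric formulas are the standard definitions, written in plain Python.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Compute precision, recall, and F1 for one positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

# 95 standard orders and 5 priority orders (made-up imbalance).
y_true = [0] * 95 + [1] * 5
y_pred = [0] * 100  # a lazy model that never predicts "priority"

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(accuracy)                             # 0.95
print(precision_recall_f1(y_true, y_pred))  # (0.0, 0.0, 0.0)
```

95% accuracy, and it never catches a single priority order. That is why F1 gets the final say.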

7. Deploy

Deployment matters more than training.

Use:

- APIs (Flask / FastAPI)

- Containers (Docker)

- Cloud (AWS / GCP / Azure)

8. Monitor + Improve

Your model will degrade.

It’s not “if.”

It’s “when.”

So:

- Track performance

- Retrain regularly

- Handle drift
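
One common way to put a number on drift is the Population Stability Index (PSI), which compares how a feature was distributed at training time with how it looks in production. The sketch below is a plain-Python version under stated assumptions: equal-width bins taken from the training data, a small floor to avoid log(0), and made-up weight values. The 0.2 alert level is a widely used rule of thumb, not a hard rule.

```python
import math

def population_stability_index(expected, actual, bins=10):
    """PSI between a reference sample (expected) and a newer sample (actual)."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Made-up order weights: training data vs. a production sample that got heavier.
train_weights = [i / 10 for i in range(100)]
live_weights = [w + 3 for w in train_weights]

psi_same = population_stability_index(train_weights, train_weights)
psi_shifted = population_stability_index(train_weights, live_weights)
print(psi_same, psi_shifted)
if psi_shifted > 0.2:  # rule-of-thumb threshold
    print("Drift detected: schedule a retrain")
```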

About the Author

Arman Qureshi is a Machine Learning Engineer with 7+ years of experience building production-grade AI systems across eCommerce, fintech, and SaaS platforms. He has deployed scalable ML pipelines using Python, TensorFlow, and cloud platforms like AWS and GCP.

Arman holds certifications in:

- Google Professional Machine Learning Engineer

- AWS Certified Machine Learning – Specialty

He has led multiple automation projects that reduced operational costs by up to 60% and improved model accuracy beyond 90% in real-world deployments.

When he’s not debugging models at 2 AM, he writes practical, no-fluff guides to help businesses actually use AI—not just talk about it.

Final Thoughts

Here’s the thing:

Machine learning isn’t hard because of algorithms.

It’s hard because:

- Your data is messy

- Your expectations are unrealistic

- Your deployment is ignored

And honestly?

Most “ML projects” fail not because of tech—but because of bad planning.

If you do this right:

You save time. Money. Effort.

If you do it wrong?

You get a fancy model… that nobody uses.